If you think of a website as a set of page types, each made up of sections such as content, navigation, and footer, it becomes apparent that the value of a particular page lies in the content that is unique to it. Sections like footers or site-wide navigation are repeated on every page and add no value specific to that page.

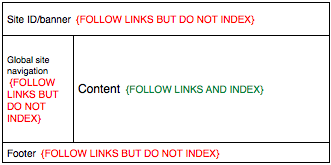

So, it would be helpful to be able to instruct search engine robots to not index specific areas of the web page. Here’s a wireframe of what I’m thinking.

How? Well, I haven’t found a real solution. Here’s an idea though.

We could extend XHTML with a schema that would include the ability to add attributes to elements like DIVs, ULs, OLs, Ps, and so on.

The attributes could be along these lines:

<div robot-follow="yes" robot-index="no">Stuff you don’t want indexed here</div>

Of course, then the makers of the bots would need to program to heed these attributes.
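To make that concrete, here is a minimal sketch of what a bot might do if it chose to heed such attributes. It is purely illustrative: no real crawler recognizes `robot-index`, the names `SectionAwareIndexer` and `indexable_text` are made up for this example, and it assumes well-formed XHTML where every non-void element is explicitly closed.

```python
from html.parser import HTMLParser

# Elements that never have a closing tag, so they must not affect nesting depth.
VOID_TAGS = {"area", "base", "br", "col", "embed", "hr", "img", "input",
             "link", "meta", "source", "track", "wbr"}


class SectionAwareIndexer(HTMLParser):
    """Collects page text, skipping subtrees marked robot-index="no" (hypothetical attribute)."""

    def __init__(self):
        super().__init__()
        self.indexed_text = []  # text chunks the bot would index
        self.skip_depth = 0     # greater than 0 while inside an excluded subtree

    def handle_starttag(self, tag, attrs):
        if tag in VOID_TAGS:
            return
        if self.skip_depth:
            # Already inside an excluded section: just track nesting.
            self.skip_depth += 1
        elif (dict(attrs).get("robot-index") or "").lower() == "no":
            self.skip_depth = 1

    def handle_endtag(self, tag):
        if tag in VOID_TAGS:
            return
        if self.skip_depth:
            self.skip_depth -= 1

    def handle_data(self, data):
        if not self.skip_depth and data.strip():
            self.indexed_text.append(data.strip())


def indexable_text(html):
    """Return the text a bot honoring robot-index would keep."""
    parser = SectionAwareIndexer()
    parser.feed(html)
    parser.close()
    return " ".join(parser.indexed_text)
```

Feeding it a page whose footer `div` carries `robot-index="no"` would return only the text outside that `div`. Handling `robot-follow` would work the same way, except the bot would keep collecting links inside the excluded section.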

So, yeah, all in all, a fairly impractical idea, since nothing is implemented. However, if it were, I would use it on many websites.

3 responses to “Restricting search indexing to sections of a web page”

Putting search indexing attributes on a section of a web page feels too heavy. As (X)HTML should be for markup, JavaScript for behavior, and CSS for presentation, element tags feel like the wrong place for robot instructions. My suggestion would be to use CSS selectors within the robots.txt file as follows:

User-agent: *

#body .content {

index: yes;

follow: yes;

}

#body .nav {

index: no;

follow: yes;

}
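As a rough illustration of how a bot could read rules like these, here is a small parser sketch. The syntax itself is only the proposal above, not anything robots.txt supports, and `parse_selector_rules` is a hypothetical helper name. It also assumes only `yes` means true and ignores per-agent grouping.

```python
import re

# One "selector { declarations }" block; nested braces are not supported.
RULE_BLOCK = re.compile(r"([^{}]+)\{([^{}]*)\}")


def parse_selector_rules(text):
    """Return {selector: {"index": bool, "follow": bool}} from the proposed syntax."""
    # Drop User-agent lines; this sketch applies every rule to every bot.
    body = "\n".join(
        line for line in text.splitlines()
        if not line.strip().lower().startswith("user-agent:")
    )
    rules = {}
    for selector, declarations in RULE_BLOCK.findall(body):
        settings = {}
        for declaration in declarations.split(";"):
            if ":" not in declaration:
                continue  # blank remainder after the last semicolon
            name, value = declaration.split(":", 1)
            settings[name.strip().lower()] = value.strip().lower() == "yes"
        # Collapse whitespace so "#body   .nav" and "#body .nav" are the same key.
        rules[" ".join(selector.split())] = settings
    return rules
```

One practical wrinkle: existing robots.txt parsers treat `#` as the start of a comment, so ID selectors such as `#body` would need some escaping convention before this could coexist with current files.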

Even though XHTML is eXtensible, adding attributes that do not apply to “content” or to the markup of content seems like the wrong approach. What are your thoughts?

Good point, Jared. It would be great to be able to use selectors in robots.txt like you’ve illustrated.

However, as things stand, there can only be one robots.txt file for a site. This could cause a problem when a single site has multiple types of pages. Maybe you could prefix the selector with the file path, like:

/path/to/file.html #body .content{

index: yes;

follow: yes;

}

However, then you’d have to do that for every file. Maybe if you could use a wildcard, like:

/path/to/*.html #body .content
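Here is a sketch of how the wildcard matching might work, using shell-style patterns from Python’s standard `fnmatch` module. The rule format is only the idea above, and `split_rule_header` and `rule_applies` are hypothetical names. It assumes the path pattern contains no spaces.

```python
from fnmatch import fnmatchcase


def split_rule_header(header):
    """Split '/path/to/*.html #body .content' into (path_pattern, selector)."""
    # The first space separates the path pattern from the CSS selector.
    path_pattern, _, selector = header.strip().partition(" ")
    return path_pattern, selector.strip()


def rule_applies(header, url_path):
    """True if the rule's path pattern matches the given URL path."""
    path_pattern, _ = split_rule_header(header)
    # Note: fnmatch's '*' also matches '/', so the pattern covers subdirectories too.
    return fnmatchcase(url_path, path_pattern)
```

With this, a single rule headed `/path/to/*.html #body .content` would cover `/path/to/file.html` and every other `.html` page under that path, instead of needing one rule per file.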

A parallel but page-specific approach would be to add meta tags to the head of the document. This, I think, does follow the spirit of XHTML, in that the head section contains metadata. There is already a robots meta tag, so maybe that could be extended.

The current usage looks like:

<meta name="ROBOTS" content="NOINDEX, NOFOLLOW" />

Maybe a revision could look like:

<meta name="ROBOTS" content="noindex: #body .nav, #footer; nofollow: #footer" />
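A bot could read that revised `content` value with very little code. The sketch below assumes the made-up format shown above (directives separated by semicolons, selectors separated by commas), and `parse_robots_meta` is a hypothetical name, not part of any existing library.

```python
def parse_robots_meta(content):
    """Parse 'noindex: #body .nav, #footer; nofollow: #footer' into a dict of selector lists."""
    directives = {}
    for part in content.split(";"):
        # Split on the first colon only, so selectors such as 'a:hover' stay intact.
        name, sep, selector_list = part.partition(":")
        if not sep:
            continue  # no "directive: selectors" pair in this part
        selectors = [s.strip() for s in selector_list.split(",") if s.strip()]
        directives.setdefault(name.strip().lower(), []).extend(selectors)
    return directives
```

A current-style value like `NOINDEX, NOFOLLOW` contains no colon, so this returns an empty dict for it and a bot could fall back to its existing page-wide handling.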

That kind of meta information could be added to template systems, like in Dreamweaver, and thus be included per type of page.

Here’s a post from Google on using rel="nofollow".

http://googleblog.blogspot.com/2005/01/preventing-comment-spam.html